Introduction to Emotion Recognition in Mobile Apps

Why Emotion Recognition in Apps Feels So Revolutionary

Imagine your phone tuning into your mood as effortlessly as a close friend. That’s the magic of emotion recognition technology. It’s not just about detecting if you’re happy, sad, or stressed—it’s about creating mobile apps that truly “get” you. Whether it’s a fitness app adjusting your routines based on frustration levels or a meditation app offering extra calming exercises after sensing your anxiety, the possibilities feel almost limitless.

This isn’t science fiction; it’s happening now. By analyzing subtle cues like facial expressions, tone of voice, or even typing speed, apps can estimate a user’s emotional state in near real time. Think about how this could change customer service apps, where an automated assistant shifts tone based on your mood. Or picture gaming apps lowering the difficulty when they notice signs of player burnout. These are no longer futuristic ideas; they’re knocking at the door.

- Facial expression analysis that catches fleeting smiles or frowns through selfies.

- Voice analysis detecting stress or excitement in your tone during calls.

- Behavioral tracking noticing changes in typing or scrolling patterns.

The result? Mobile experiences that are not only smarter but emotionally in sync with you. Talk about next-level personalization!

The Emotional Connection Between Users and AI

It’s fascinating how emotion recognition transforms the way we interact with tech. Why do we connect so deeply with certain apps? Part of it lies in their ability to reflect our needs back at us in ways that feel eerily human. Imagine receiving supportive messages from a mental health app exactly when you’re feeling low, without having to say a word. The app hasn’t read your mind; it has inferred your mood from signals such as your voice or your typing. And that connection isn’t just helpful; it can be profoundly comforting.

Developers are tapping into these capabilities to craft human-centered designs. By integrating trust-building features, like transparent consent options or letting users adjust sensitivity for emotion tracking, they ensure the technology feels empowering rather than invasive. After all, when an app respects your emotions, it earns your loyalty.
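To make the consent and sensitivity idea concrete, here is a minimal Python sketch of what such user-facing settings might look like. The class name, the signal names (`face`, `voice`, `typing`), and the rule that maps sensitivity to a confidence threshold are all assumptions made for illustration, not a description of any particular app or SDK.

```python
from dataclasses import dataclass, field

# Hypothetical signal names; a real app would define its own.
SIGNALS = ("face", "voice", "typing")


@dataclass
class EmotionTrackingSettings:
    # Every signal starts switched off until the user explicitly opts in.
    consent: dict = field(default_factory=lambda: {s: False for s in SIGNALS})
    # 0.0 = react only to fully confident detections, 1.0 = react to anything.
    sensitivity: float = 0.5

    def grant(self, signal: str) -> None:
        if signal not in self.consent:
            raise ValueError(f"unknown signal: {signal}")
        self.consent[signal] = True

    def revoke(self, signal: str) -> None:
        if signal not in self.consent:
            raise ValueError(f"unknown signal: {signal}")
        self.consent[signal] = False

    def should_react(self, signal: str, confidence: float) -> bool:
        """React only with consent, and only above the user's threshold."""
        if not self.consent.get(signal, False):
            return False
        # Assumed mapping: higher sensitivity means a lower confidence bar.
        threshold = 1.0 - self.sensitivity
        return confidence >= threshold


settings = EmotionTrackingSettings()
settings.grant("voice")
print(settings.should_react("voice", 0.9))  # consented and confident
print(settings.should_react("face", 0.9))   # no consent for the camera
```

The design choice worth noting is that consent is checked before anything else, so a revoked signal can never trigger a reaction no matter how confident the model is.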

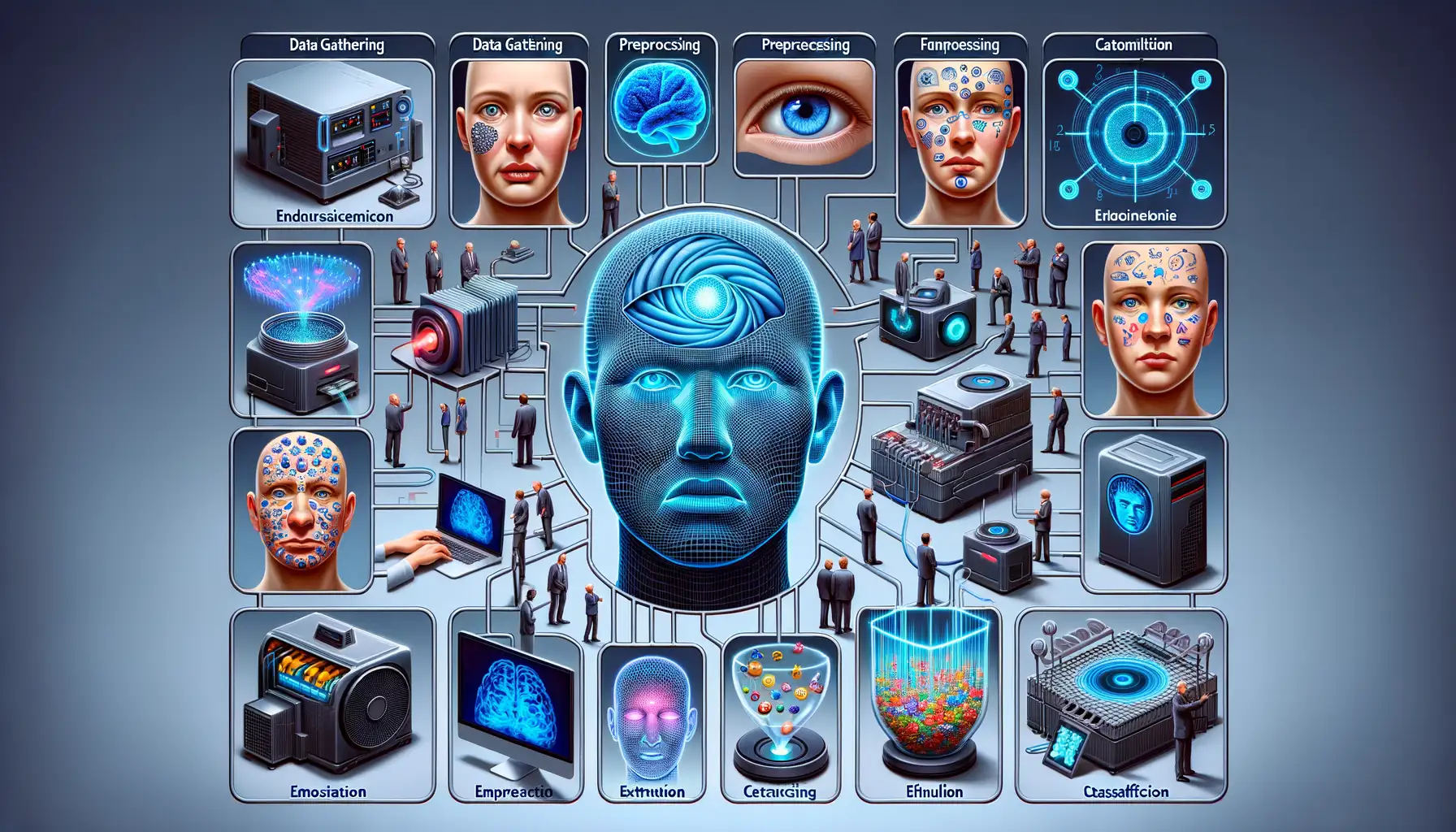

Role of AI in Emotion Detection and Analysis

How AI Understands Human Emotions

Imagine your phone could read between the lines of what you’re feeling, almost like a close friend who just gets you. That’s what AI-based emotion detection aims for. It analyzes subtle cues like tone of voice, facial expressions, or even the way you type, and maps them to likely emotional states. The output is an estimate rather than a certainty, but it can be a very useful one.

Thanks to machine learning, these systems are trained on mountains of labeled examples (think millions of smiles, frowns, and everything in between) to recognize patterns. The result? Given enough vocal and contextual cues, a well-trained model can often tell a sarcastic “I’m fine” from a cheerful one, although sarcasm remains one of the harder cases. How cool is that?

But let’s break it down further with examples:

- Facial Expression Analysis: AI detects microexpressions, those fleeting changes in your face you barely notice yourself.

- Text Sentiment Analysis: Analyzing words, emojis, and even punctuation to determine if your message screams joy or whispers frustration.
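The text sentiment idea above can be sketched in a few lines of Python. This is a deliberately tiny, lexicon-based toy: the word list, the emoji list, the weights, and the way exclamation marks amplify the score are all assumptions chosen for illustration. Production systems usually rely on trained language models instead.

```python
import re

# Tiny illustrative lexicon; words, emojis, and weights are assumptions.
LEXICON = {
    "love": 2, "great": 2, "happy": 2, "thanks": 1,
    "hate": -2, "awful": -2, "annoyed": -1, "tired": -1,
    "😊": 2, "🎉": 2, "😡": -2, "😢": -2,
}


def sentiment_score(message: str) -> float:
    """Return a signed score: positive leans joyful, negative leans frustrated."""
    text = message.lower()
    # Words: split on letters and apostrophes, then look each one up.
    words = re.findall(r"[a-z']+", text)
    score = float(sum(LEXICON.get(word, 0) for word in words))
    # Emojis: checked character by character (no single-letter words in LEXICON).
    score += sum(LEXICON.get(char, 0) for char in text)
    # Punctuation: up to three exclamation marks amplify whatever was found.
    exclamations = min(message.count("!"), 3)
    return score * (1 + 0.25 * exclamations)


print(sentiment_score("I love this app!!! 🎉"))
print(sentiment_score("So tired and annoyed 😡"))
```

A lexicon like this has no way of spotting sarcasm, which is exactly why trained models and extra context matter.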

The Magic Ingredient: Context

AI gets more accurate when it takes context into account. For example, a smile at a surprise party means happiness, but a similar smile during an uncomfortable work meeting could mean something entirely different. Without context, it’s just guesswork. Context is the unsung hero of emotion analysis: it connects dots humans naturally piece together. By treating context as an extra input alongside the face, voice, or text signal, AI starts tuning into these nuances, personalizing its responses and insights.
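One simple way to picture the role of context is Bayes’ rule: the same observed smile is combined with a different prior depending on the situation. The sketch below is purely illustrative. The emotion labels, the context names, and every probability in it are made-up assumptions, not measured values.

```python
# Assumed likelihood of seeing a smile under each emotion (illustrative only).
P_SMILE_GIVEN = {"happy": 0.8, "uncomfortable": 0.4, "neutral": 0.2}

# Assumed prior over emotions in each context (illustrative only).
PRIORS = {
    "surprise_party": {"happy": 0.7, "uncomfortable": 0.1, "neutral": 0.2},
    "tense_meeting": {"happy": 0.1, "uncomfortable": 0.6, "neutral": 0.3},
}


def posterior_given_smile(context: str) -> dict:
    """P(emotion | smile, context) via Bayes' rule, normalized to sum to 1."""
    prior = PRIORS[context]
    unnormalized = {e: P_SMILE_GIVEN[e] * prior[e] for e in prior}
    total = sum(unnormalized.values())
    return {e: value / total for e, value in unnormalized.items()}


for context in PRIORS:
    posterior = posterior_given_smile(context)
    best = max(posterior, key=posterior.get)
    print(context, "->", best, round(posterior[best], 3))
```

With these assumed numbers, the smile at the party comes out as most likely “happy” (about 0.875), while the identical smile in the tense meeting comes out as most likely “uncomfortable”.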

Key Technologies and Algorithms for Emotion Recognition

Transformative Technologies Powering Emotion-Sensing Magic

When it comes to weaving technology with human emotion, a handful of tools and algorithms do the behind-the-scenes work. At the heart of it are deep neural networks, which pick up subtle patterns in expressions, voice intonation, and even the tone of text like a seasoned empath. These aren’t your average algorithms; they’re the Sherlock Holmes of AI, sniffing out every emotional clue.

There’s also no escaping the influence of computer vision. Picture this: your app uses facial feature tracking to interpret a fleeting furrowed brow or a radiant smile. This is typically done with convolutional neural networks (CNNs), image models that can be trained to recognize expressions, including brief microexpressions. They turn a quick glance into a treasure chest of insights.

- Natural Language Processing (NLP): When analyzing text—be it a heartfelt message or a snarky tweet—advanced NLP models read the emotional undertones like a novel they can’t put down.

- Speech Analysis: Ever noticed how someone’s voice trembles when anxious? Speech emotion analysis picks up on tone, pauses, and pitch variation, though its accuracy depends on audio quality and on the speaker.

These technologies may be complex, but their mission is simple: decoding emotions like a digital mind-reader.
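To give a feel for the pitch variation mentioned under speech analysis, here is a small pure-Python sketch that estimates the pitch of an audio frame by autocorrelation and then measures how much pitch varies across frames. The function names, the 80 to 400 Hz search range, and the synthetic sine-wave frames are assumptions for illustration. Real speech is far messier, and real apps would normally use a dedicated audio library.

```python
import math
import statistics


def estimate_pitch_hz(samples, sample_rate, fmin=80.0, fmax=400.0):
    """Estimate the fundamental frequency of one frame by autocorrelation."""
    n = len(samples)
    min_lag = max(1, int(sample_rate / fmax))
    max_lag = min(int(sample_rate / fmin), n - 1)
    best_lag, best_corr = 0, 0.0
    for lag in range(min_lag, max_lag + 1):
        # Raw (unnormalized) sum: it slightly favors shorter lags, which
        # helps avoid locking onto a multiple of the true period.
        corr = sum(samples[i] * samples[i + lag] for i in range(n - lag))
        if corr > best_corr:
            best_lag, best_corr = lag, corr
    # A silent frame never beats 0.0, so it is reported as unvoiced (0.0).
    return sample_rate / best_lag if best_lag else 0.0


def pitch_variation_hz(frames, sample_rate):
    """Standard deviation of pitch across voiced frames (0.0 if fewer than two)."""
    pitches = [estimate_pitch_hz(frame, sample_rate) for frame in frames]
    voiced = [p for p in pitches if p > 0]
    return statistics.pstdev(voiced) if len(voiced) > 1 else 0.0


def sine_frame(freq_hz, sample_rate=8000, length=800):
    """Synthetic test tone standing in for a voiced speech frame."""
    return [math.sin(2 * math.pi * freq_hz * i / sample_rate) for i in range(length)]


steady = [sine_frame(220.0), sine_frame(220.0)]
wavering = [sine_frame(220.0), sine_frame(180.0)]
print(pitch_variation_hz(steady, 8000), pitch_variation_hz(wavering, 8000))
```

Because lags are whole numbers of samples, the estimate is only approximate (a 220 Hz tone at 8 kHz lands near 222 Hz). A feature like this would be one input among many to an emotion model, not a stress detector on its own.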

Challenges and Considerations in Developing Emotion Recognition Features

Complexities in Understanding Human Emotions

Developing an app that can truly grasp emotions? That’s no walk in the park. Humans are gloriously unpredictable, and their emotions don’t always play by the rules. Think about it: a smile might mean happiness or hide frustration; a neutral face could mask deep sadness. Teaching a machine to interpret such nuances is like asking it to read poetry—there’s more between the lines than on them.

One major hurdle lies in ensuring accuracy across diverse populations. An algorithm trained on one cultural group might completely misread another. For instance, eye contact can signify confidence in some cultures and discomfort in others. How do we make AI as fluent in these subtleties as a socially skilled human?

And then there’s the dreaded issue of bias. Datasets, no matter how massive, often reflect the imperfections of the real world. If the training data skews toward certain expressions, it risks leaving others misunderstood—or worse, ignored entirely.
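A practical first step against this kind of bias is simply to measure it. The sketch below breaks accuracy down by group on a labeled evaluation set. The record layout, the group names, and the sample data are hypothetical, and choosing which groups to audit is a decision for each team.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """records: iterable of (group, true_label, predicted_label) tuples."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, truth, predicted in records:
        total[group] += 1
        correct[group] += int(truth == predicted)
    return {group: correct[group] / total[group] for group in total}


def accuracy_gap(per_group):
    """Difference between the best-served and worst-served group."""
    return max(per_group.values()) - min(per_group.values())


# Hypothetical evaluation results, for illustration only.
sample = [
    ("group_a", "happy", "happy"), ("group_a", "sad", "sad"),
    ("group_a", "angry", "angry"), ("group_a", "happy", "sad"),
    ("group_b", "happy", "sad"), ("group_b", "sad", "sad"),
    ("group_b", "angry", "happy"), ("group_b", "happy", "neutral"),
]
per_group = accuracy_by_group(sample)
print(per_group, accuracy_gap(per_group))
```

A large gap between groups is a sign that the training data underrepresents someone, even when the overall accuracy looks healthy.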

- Privacy concerns: Users expect emotional intelligence, but not at the cost of intrusive data collection.

- Real-time processing: Apps must analyze emotions without noticeable lag, which demands fast, lightweight models.

The Fine Line Between Helpfulness and Intrusion

Let’s get real: while users appreciate apps that “get them,” no one wants to feel like Big Brother is dissecting their every frown. Striking that balance is crucial. Imagine a fitness app encouraging you to exercise when it sees you’re stressed—it’s great in theory, but feels invasive if mishandled.

Transparency is your secret weapon. Users need to know exactly what’s happening under the hood: How is the app analyzing them? What data is being stored? With clear communication, you can turn suspicion into trust, creating technologies that feel genuinely comforting, not creepy.

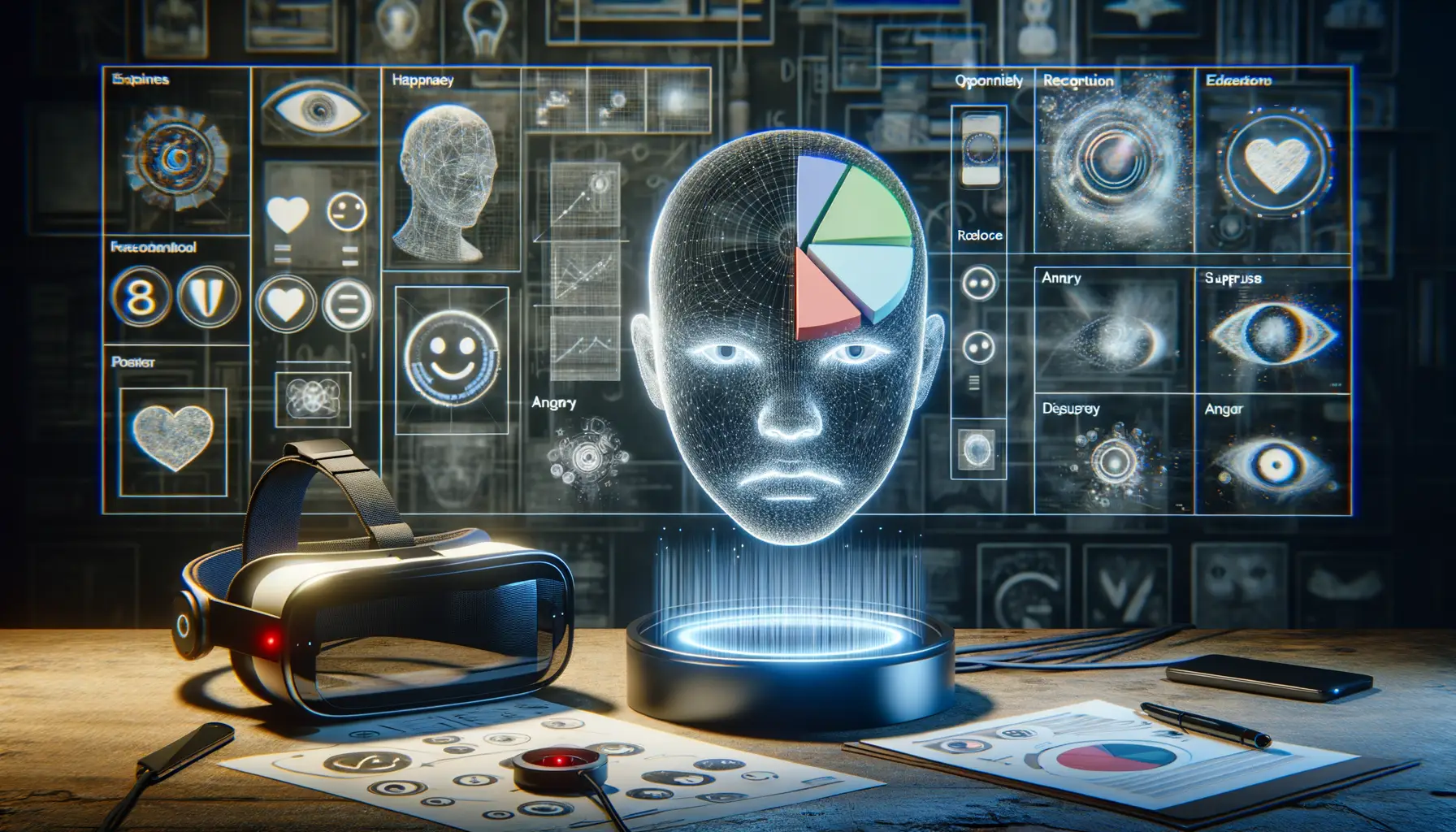

Future Trends and Opportunities in Emotion Recognition Technology

The Rise of Emotion-Aware Experiences

Imagine a world where your mobile app feels like it truly *gets* you. That’s not science fiction anymore—it’s where we’re headed with emotion recognition technology. Thanks to advancements in AI, apps are shifting from being tools to becoming empathetic companions.

What does this mean? Picture a fitness app that detects frustration in your tone during a workout and switches to an encouraging trainer voice. Or a music app that scans your face for signs of sadness and curates a playlist to lift your spirits. These aren’t just features; they’re experiences that resonate on a deeper level.

The opportunities here are vast:

- Personalized mental health support: Apps could monitor emotional shifts and recommend mindfulness exercises or breathing techniques in real time.

- Smarter customer interactions: E-commerce platforms might adjust their UX based on inferred user moods, making shopping more delightful.

- Inclusive communication: Assistive tech could help neurodiverse users by translating subtle emotional cues into clearer signals.

Breaking Boundaries with AI Integration

What sets the future apart is how seamlessly AI integrates into our lives. Combining emotion detection with wearables, for instance, opens up new possibilities. Your smartwatch could pick up stress through tiny changes in the timing of your heartbeat, syncing with your phone to suggest a calming walk, or even schedule one automatically.
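Those “tiny changes” in the heartbeat are commonly summarized as heart rate variability (HRV). One widely used HRV measure is RMSSD, the root mean square of successive differences between beat-to-beat intervals, and lower values are often associated with stress. The sketch below computes it in Python. The `looks_stressed` helper, its `drop_ratio` threshold, and the sample intervals are assumptions for illustration, not clinical guidance.

```python
import math


def rmssd(rr_intervals_ms):
    """Root mean square of successive differences between heartbeats (ms)."""
    if len(rr_intervals_ms) < 2:
        raise ValueError("need at least two beat-to-beat intervals")
    diffs = [b - a for a, b in zip(rr_intervals_ms, rr_intervals_ms[1:])]
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))


def looks_stressed(rr_intervals_ms, baseline_rmssd, drop_ratio=0.6):
    """Hypothetical rule: flag when HRV falls well below the user's baseline."""
    return rmssd(rr_intervals_ms) < drop_ratio * baseline_rmssd


# Made-up beat-to-beat intervals in milliseconds.
relaxed = [800, 850, 780, 840]
tense = [800, 810, 790, 800]
baseline = rmssd(relaxed)
print(round(baseline, 1), round(rmssd(tense), 1), looks_stressed(tense, baseline))
```

Comparing against a personal baseline rather than a fixed number matters here, because typical HRV differs a lot from one person to the next.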

The beauty of these technologies lies in their potential to make our digital interactions more *human*. The apps of tomorrow won’t just work for you; they’ll work *with* you, creating a kind of bond that feels almost magical. This isn’t just innovation—it’s empathy encoded into every line of code.